
3/22/2023

Wget options

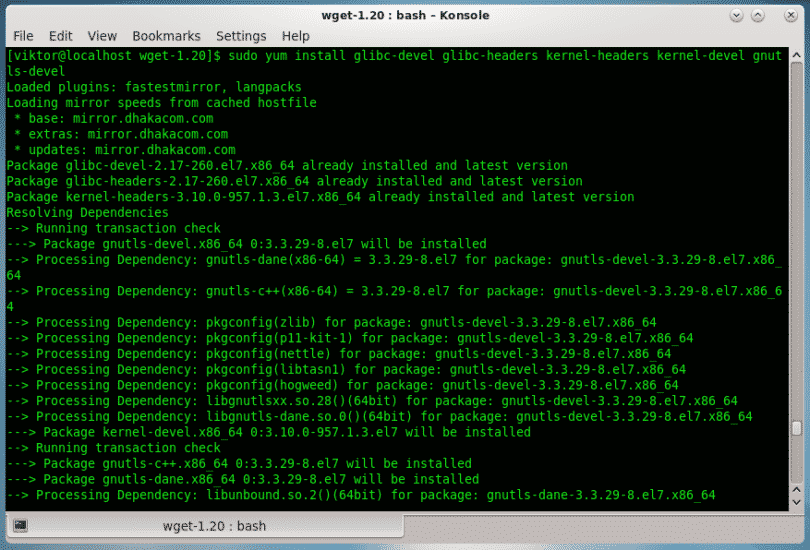

Downloading files from the Internet is something quite common and normal in the daily use of a computer. People who use their computer in a basic way download these files through a graphical interface, usually the browser itself. Slightly more experienced users use download managers, but always with a graphical interface. But what if we are on a server? Or, if we like using the terminal, can we download a file from it? The answer is yes. That is why we will explain the wget command to you.

GNU wget is a CLI (command-line interface) utility that lets you download files from the Internet, as long as you know their link. It is very efficient with computer resources; its use is practically unnoticeable. It also lets you rename downloaded files quickly. And although it is a program used in the terminal, it is quite complete. Here is a list of the main features of the application:

- Wget supports downloads through proxies. It will even work with Tor; it is just a question of having a Tor proxy set up correctly on your server and digging for the additional commands.
- It allows limiting the bandwidth used for downloads.
- Wget works with SSL/TLS to secure downloads.
- Finally, wget is available for many UNIX systems, and also for Windows.

And since it is developed by GNU, it is, of course, open-source software.

The first thing we need to know is that almost all Linux distributions have it installed by default. If yours does not, just invoke your distribution's package manager and install it. I assure you that it will be in the official repositories.

The most basic way to use the command is simply `:~$ wget` followed by the link of the file. This downloads the file into the directory where we are. There is also an option that deletes the output after execution; it is not as clean as the quiet option or redirecting to `/dev/null 2>&1`, but it is still very powerful.

A huge advantage of wget is that it can download multiple files whose links are in a text file, one link per line. For example, create the list with `:~$ nano files.txt`. To make wget download them all in one run, just add the option `-i` and indicate the path of the text file with the links. If the file is in the same directory, just put the name: `:~$ wget -i files.txt`. Note that wget fetches the listed files one after another, not simultaneously.
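The commands above can be wrapped in a few shell helpers. This is a minimal sketch, assuming bash; the function names, the `DRY_RUN` switch and the example.com URLs are hypothetical. `-O` (save under another name) and `--limit-rate` (cap the bandwidth) are standard wget options that correspond to the renaming and bandwidth features listed earlier.

```shell
#!/usr/bin/env bash
# Sketch only: helper names and DRY_RUN are hypothetical conveniences.
# With DRY_RUN=1 the wget command is printed instead of executed.
set -euo pipefail

run() {
  if [[ "${DRY_RUN:-0}" == 1 ]]; then
    echo "$*"      # show the command that would run
  else
    "$@"           # actually run it
  fi
}

# Basic download into the current directory: fetch URL
fetch() { run wget "$1"; }

# Download and save under another file name (-O): fetch_as URL NAME
fetch_as() { run wget -O "$2" "$1"; }

# Limit the bandwidth, e.g. RATE=200k (--limit-rate): fetch_limited URL RATE
fetch_limited() { run wget --limit-rate="$2" "$1"; }

# Write one URL per line, the format wget -i reads: write_url_list FILE URL...
write_url_list() {
  local list_file="$1"; shift
  printf '%s\n' "$@" > "$list_file"
}

# Download every URL in the list, one after another: fetch_list FILE
fetch_list() { run wget -i "$1"; }
```

For instance, `write_url_list files.txt https://example.com/a.iso https://example.com/b.iso` followed by `DRY_RUN=1 fetch_list files.txt` prints `wget -i files.txt` without downloading anything; drop `DRY_RUN=1` to fetch both files in turn.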

Wget or curl with their respective "quiet" options (`-q` for wget, `-s` for curl) will silence some of the output from scripts run by cron, but not all. They will still likely show critical errors, which is why you may want to redirect to `/dev/null`. However, we often see cases where you need some errors but not others. wget has a `-nv` (`--no-verbose`) flag that is not verbose but not quiet either.

On most Linux systems you can also use `/etc/cron.d/filename` to fine-tune your cron. You can specify a mail address (the `MAILTO` variable) within the file you place in this directory, which can be useful to alert someone in case of a problem.

Also, don't overlook security. Run your crons as a user with as few privileges as possible. If you simply need to wget a file, a normal user with no login privileges will often suffice.

Lastly, don't forget the `--tries=number` option, which makes wget retry in case of a failure. Note that the default is 20 retries, except for fatal errors such as "connection refused" or "not found" (404), which are not retried. There is also `--retry-connrefused`, which retries even when a connection is refused; this is useful for overloaded URLs. Always use this option if you are fetching URLs frequently.

Timeouts matter as well: wget's default read timeout is 900 seconds, and `--timeout=seconds` sets the DNS, connect and read timeouts in one go. That's 15 minutes! I've seen many servers with dozens of crons piled up because they poll every 5 minutes, but the server is slow, so each one waits 10 minutes or so to get the data. The problem quickly snowballs out of control.

To summarize:

1. Use the least privileged user possible to run the cron.
2. Explicitly set timeouts to suit your application.
3. Decide what level of error reporting you need, and use `-q`, `-nv` and/or `/etc/cron.d` as required.

These tips are mostly for wget, but curl has many of the same options.

Wget is a program with incredible untapped potential for most people. Oh… for a minute there I thought `wget --spider` would give me all the links spidered right from the site's default page.

We often create a "wgetforuser", which is a copy of wget with permissions that users can use, and then set the main wget to be usable only by root. This helps against (but does not prevent) some attacks where a wget command is passed into an insecure web application.
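The cron tips above can be combined into a single job definition. This is a minimal sketch, assuming bash and a cron daemon that reads `/etc/cron.d` (such as cronie or Vixie cron); the user `fetcher`, the mail address, URL, output path and the retry/timeout values are hypothetical placeholders, not values from the article.

```shell
#!/usr/bin/env bash
# Sketch only: user, address, URL, path and numeric values are hypothetical.
set -euo pipefail

# Build the wget command: -nv (not verbose, not quiet), a bounded number of
# tries, retry on "connection refused", and an explicit timeout instead of
# relying on the 900-second default read timeout.
cron_wget_cmd() {
  local url="$1" out="$2"
  echo "wget -nv --tries=3 --retry-connrefused --timeout=30 -O $out $url"
}

# Print the contents of an /etc/cron.d file. These files use the system
# crontab format, which has a user field after the five schedule fields.
# Cron mails any output the job prints to MAILTO. Note that -nv still prints
# one short line per successful download; -q silences that too, at the cost
# of also hiding error messages.
cron_d_file() {
  local mailto="$1" schedule="$2" user="$3" url="$4" out="$5"
  printf 'MAILTO=%s\n' "$mailto"
  printf '%s %s %s\n' "$schedule" "$user" "$(cron_wget_cmd "$url" "$out")"
}
```

To install it, one might run `cron_d_file admin@example.com '*/5 * * * *' fetcher https://example.com/data.json /var/tmp/data.json | sudo tee /etc/cron.d/fetch-data`. Keep in mind that cron turns an unescaped `%` in the command into a newline, so URLs containing `%` need it escaped as `\%`.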
